What Are Foundation Models?

AI, But Make It Make Sense

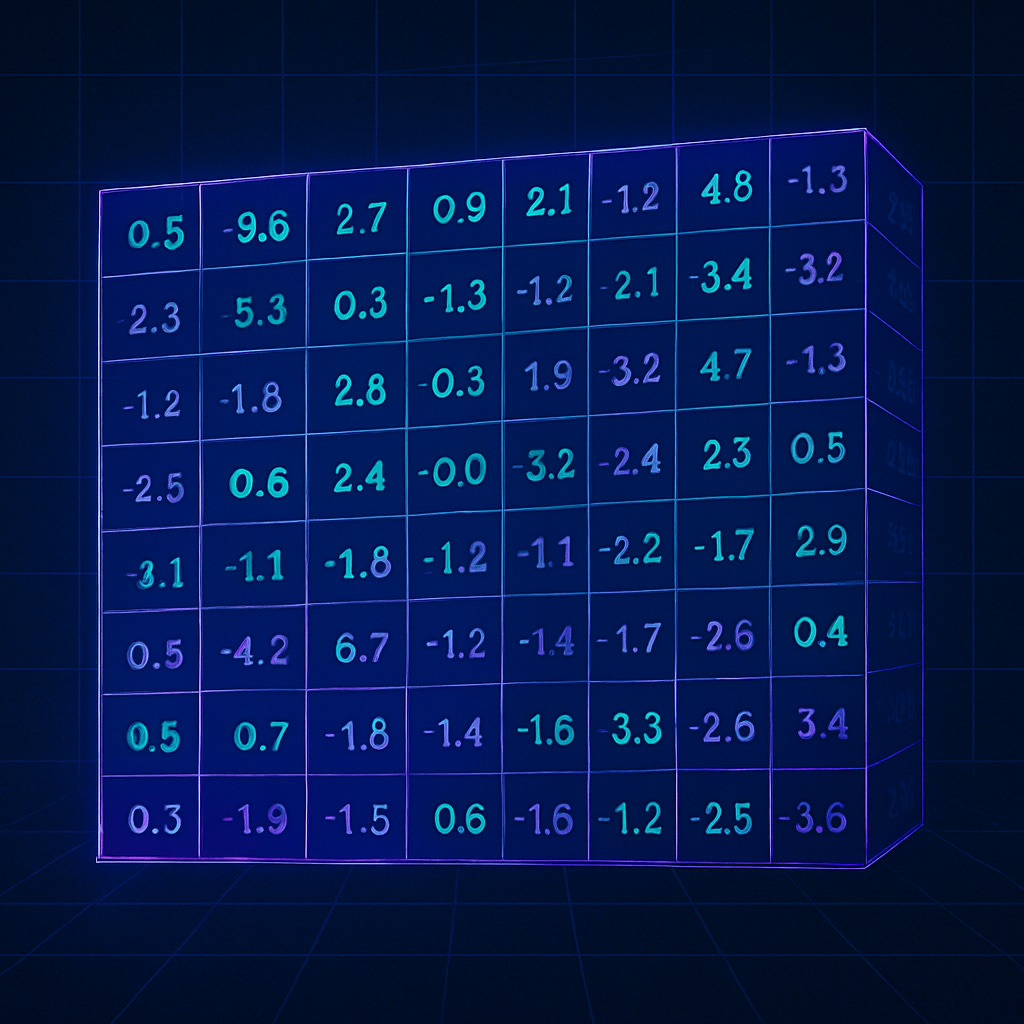

Artificial intelligence didn’t suddenly wake up one morning and become smart. What really changed was how it learns. At the heart of this transformation are foundation models: giant, pre-trained systems that power everything from chatbots to image generators.

They’re called “foundation models” because that’s what they are: the base layer beneath modern AI. If the apps, assistants, and creative tools we use were buildings, these models would be the concrete slab holding them up, unseen by most people but essential for everything above them.

Imagine a student who has read almost everything: novels, research papers, recipes, code, and instruction manuals. That’s what a foundation model is like. It learns the general patterns of how language, logic, and structure fit together, rather than memorizing individual answers. Once trained, it can be adapted to all kinds of tasks: writing emails, generating art, answering questions, summarizing documents, even helping write software.

Many of the AI apps and tools you use are really customized versions of a larger foundation model underneath. Instead of starting from scratch every time, developers take that general-purpose brain and teach it to specialize.

The Old Way vs. The New Way

Before foundation models, AI systems were like individual workers trained for one very narrow job. One handled spam detection, another did translation, another managed chat responses. None of them shared knowledge, and each had to be built from the ground up. It was slow, repetitive, and expensive, like hiring and training a new employee for every small task.

Foundation models flipped that idea. Rather than building many small AIs, developers train one enormous model on vast amounts of text, images, and other data, and then fine-tune it for different purposes. It’s as if you trained one brilliant generalist and then gave them short courses in law, art, or medicine, depending on what they needed to do. That single change, building one broad base instead of many narrow ones, is what made modern AI both faster to build and more capable.

Why They Matter

Building one of these models is costly. Training can take weeks or months and require specialized supercomputers. But here’s the twist: once it’s trained, it can be reused again and again. Adapting it for a new purpose, say medical summaries or marketing copy, costs a fraction of the original effort. It’s like paying once to build a supercomputer and then letting everyone plug into it for different projects.

This reuse is what accelerated AI’s growth. Developers no longer have to reinvent the wheel. They can start with a foundation model that already captures the basics of language and reasoning, and quickly adapt it to new ideas. That’s why new AI tools seem to appear almost every week: the foundation is already in place.

Another benefit is consistency. When a foundation model improves (better handling of tone, reasoning, or clarity), the tools built on top of it can inherit those upgrades, often with little extra work. Each improvement ripples outward, raising the quality of the whole ecosystem.

Different Kinds of Foundation Models

The best-known foundation models are language models, the ones that power chatbots, writing tools, and coding assistants. They’re the reason tools like ChatGPT, Claude, and Copilot can understand and respond in natural language. These systems are trained on vast amounts of text, learning grammar, style, and meaning so well that they can write, summarize, and explain almost anything in plain language.

Then there are multimodal models, which can handle more than just text. They can process and describe images, interpret charts, and connect visuals and words. That’s how tools like DALL·E can turn text into images, or how Gemini can analyze both documents and pictures in a single conversation. It’s a step toward AI that takes in the world through more than one kind of input, not just language.

Finally, some models are specialized for certain fields. GitHub Copilot, for example, is tuned for writing computer code. AlphaFold predicts the 3D shapes that proteins fold into, helping scientists accelerate drug discovery. Legal and medical AIs are being trained to summarize dense documents or flag key details for human experts. These models take general intelligence and refine it into expert-level skill, kind of like sending the foundation model to grad school.

Strengths and Weaknesses

Foundation models are incredibly good at spotting patterns, generating ideas, and working with language. They can summarize pages of text in seconds, write in almost any style, or help brainstorm creative concepts. They’re versatile and adaptable, able to assist with everything from tutoring students to drafting contracts.

But they also have limits. They don’t actually know facts the way humans do; they predict. When they generate an answer, they build it piece by piece, picking a statistically likely next word each time rather than recalling a truth from memory. That’s why they sometimes make confident mistakes or “hallucinate” false information. They’re not trying to deceive; they simply have no built-in sense of what’s real, and their knowledge stops at the data they were trained on.
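To see what “predicting” means in miniature, here’s a toy sketch in Python. It is not how real foundation models work inside (they use huge neural networks, not a simple word-count table), and the names `predict_next` and `generate` are made up for illustration. The idea it shares with them: learn which words tend to follow which, then always pick a likely next word.

```python
from collections import Counter, defaultdict

# A tiny "training corpus". Real models read vastly more text; this is a toy.
corpus = (
    "the cat sat on the mat . "
    "the cat sat on the sofa . "
    "the dog sat on the mat ."
).split()

# Count which word follows each word (a simple bigram model).
next_counts = defaultdict(Counter)
for current, following in zip(corpus, corpus[1:]):
    next_counts[current][following] += 1

def predict_next(word):
    """Return the most frequent follower of `word` seen in training.
    Ties go to whichever follower was seen first."""
    return next_counts[word].most_common(1)[0][0]

def generate(start, length=5):
    """Greedily extend `start` one most-likely word at a time."""
    words = [start]
    for _ in range(length):
        words.append(predict_next(words[-1]))
    return " ".join(words)

print(generate("the"))
```

Running it prints “the cat sat on the cat”. Every step was a statistically likely choice, yet the sentence as a whole is something the model never saw and that makes little sense. That, in miniature, is how a confident-sounding mistake can happen.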

Foundation models are powerful tools for producing and processing information, but they still need human judgment for verification and context. The combination of human oversight and machine scale is where the real strength lies.

How They’re Used Today

Most people interact with foundation models every day without realizing it. They power chat assistants that summarize, explain, or brainstorm ideas. They write and edit content for marketing, social media, and business reports. They suggest code for developers, design graphics, analyze documents, and even help professionals spot patterns that would take humans weeks to find.

These systems have quietly become part of our digital infrastructure. Like electricity or cloud computing, they increasingly run underneath the tools we use every day: an invisible intelligence layer of modern technology.