AI Is Lying to Me

How Do We Build Trust?

You’ve probably seen it happen. You ask an AI a simple question, and it gives a confident, well‑worded answer, except it’s completely wrong.

Dates that never happened, facts that don’t exist, even fake studies that sound real.

It’s not lying. It’s not trying to trick you.

It’s just doing exactly what it was built to do: predict what comes next, not know what’s true.

Understanding why this happens helps you spot when AI is bluffing and use it wisely without losing trust.

What “Hallucinations” Mean in AI

When people talk about AI hallucinations, they mean moments when the AI says something that sounds believable but isn’t actually true.

It’s not self‑aware. It doesn’t know it’s wrong. It’s simply making predictions based on patterns it’s seen before, like an advanced autocomplete.

Imagine asking it,

“When was the first computer invented?”

And it answers,

“The first computer, ENIAC, was invented in 1943 at MIT by Alan Turing to break German codes during World War II.”

That sounds smart, but it’s a mash‑up of true pieces arranged in the wrong order. ENIAC was real, but it was completed in 1945 at the University of Pennsylvania, not MIT. Alan Turing worked on code‑breaking machines, but not on ENIAC.

The AI combined familiar puzzle pieces in a way that looks right but isn’t.

Why AI Makes Things Up

It Predicts; It Doesn’t Understand

At its core, AI works like a giant guessing engine. It looks at your question and predicts what word is most likely to come next. If you type “The sky is,” it might predict “blue.” If you type “The first computer was,” it might predict “invented by Alan Turing.” Each prediction is shaped by patterns in the data it learned from, not by knowledge of the world.

It doesn’t know anything; it’s just completing sentences.
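To make the “guessing engine” idea concrete, here is a minimal sketch of next‑word prediction. It is a toy, not how real AI models are built: the four‑sentence corpus is made up, and the function `predict_next` is a hypothetical name used only for illustration. Real systems use far larger data and far more sophisticated statistics, but the basic move is the same: pick the continuation that showed up most often.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus, for illustration only.
corpus = [
    "the sky is blue",
    "the sky is blue today",
    "the sky is gray",
    "the sea is blue",
]

# Count which word follows each word in the corpus.
next_word_counts = defaultdict(Counter)
for sentence in corpus:
    words = sentence.split()
    for current, following in zip(words, words[1:]):
        next_word_counts[current][following] += 1

def predict_next(prompt):
    """Return the word that most often followed the prompt's last word, or None."""
    words = prompt.lower().split()
    if not words:
        return None
    followers = next_word_counts.get(words[-1])
    if not followers:
        return None
    return followers.most_common(1)[0][0]

print(predict_next("The sky is"))
```

In this toy corpus, “blue” follows “is” three times and “gray” only once, so the sketch picks “blue.” Nothing in the code checks whether the sky is actually blue; it only counts.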

Its Training Data Isn’t Perfect

AI learns from human writing: websites, articles, books, and other text. That means it absorbs much of what we’ve said online: the good, the bad, and the wildly inaccurate.

If ten websites repeat a common myth, AI treats that as a strong pattern. So it confidently repeats it, too. The more something is said online, even if it’s wrong, the more “true” it feels to a pattern‑based system.
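A rough way to picture this is a simple tally. The sketch below assumes twelve hypothetical web pages answering one question, ten repeating the myth and two giving the correction. Real models don’t literally count votes like this, so treat it as a simplified illustration of why repetition can win over accuracy; the names `page_answers` and `most_repeated` are invented for the example.

```python
from collections import Counter

# Hypothetical data: answers found on twelve web pages to the question
# "Is the Great Wall of China visible from the moon?"
# Ten pages repeat the myth, two give the correction.
page_answers = ["yes"] * 10 + ["no"] * 2

def most_repeated(answers):
    """Return the most common answer and its share of all answers."""
    counts = Counter(answers)
    answer, count = counts.most_common(1)[0]
    return answer, count / len(answers)

answer, share = most_repeated(page_answers)
print(f"Most repeated answer: {answer} ({share:.0%} of pages)")
```

The myth wins ten to two. A system that only sees how often something is said has no way to tell that the two pages in the minority are the correct ones.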

It Sounds Confident by Design

AI isn’t trying to mislead you; it just mirrors how people write. Most writing online is confident and polished; few of us type “I think” or “maybe.” So the AI learns that tone and copies it.

The result?

It sounds sure of itself even when it’s just making an educated guess.

The Different Kinds of AI Hallucinations

Not every made‑up answer is the same. AI can go wrong in a few familiar ways:

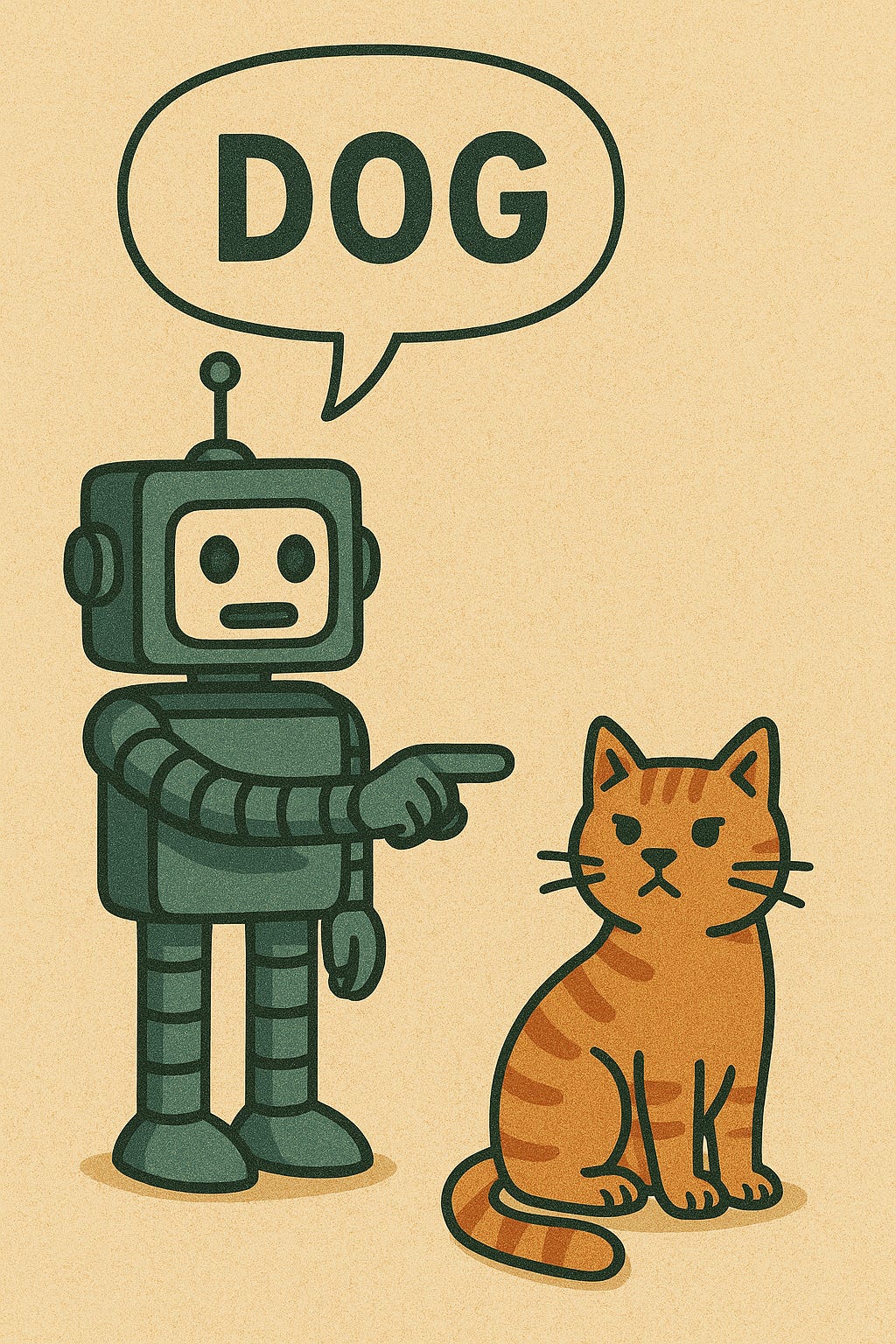

Factual Hallucinations

Getting details wrong, such as dates, names, or places.

“The Great Wall of China is visible from the moon.” (A popular myth, but not true.)

Source Hallucinations

Citing things that don’t exist: made‑up articles, books, or studies.

“According to a 2018 Harvard study…” (a study that was never written.)

Logical Hallucinations

Drawing the wrong conclusion from real facts.

“Ice cream causes shark attacks.”

They’re both common in summer, but one doesn’t cause the other.

Context Hallucinations

Losing track of what’s already been said in a conversation: contradicting itself or ignoring earlier details.

Real‑World Consequences

Sometimes hallucinations are harmless: a funny line or a wrong trivia fact.

But in serious settings, they can cause real problems.

In healthcare, AI might invent symptoms or cite fake studies.

In law, it might misquote a ruling or misstate a regulation.

In finance, it could summarize trends that don’t exist.

The more confident the tone, the easier it is to believe, and that’s what makes it risky.

How to Spot When AI Might Be Making Things Up

A few clues can help you catch it before it spreads:

Too specific: Oddly precise numbers, dates, or quotes with no source.

Too perfect: Neat, oversimplified explanations that ignore complexity.

Too confident: No hesitation, qualifiers, or mention of uncertainty.

If it sounds flawless, double‑check it. Real knowledge is usually a little messy.
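Two of these clues are mechanical enough to sketch in code. The example below is a rough heuristic, not a real hallucination detector: the word lists, the pattern for “precise details,” and the function name `red_flags` are all assumptions made for illustration. It skips the “too perfect” clue, which needs human judgment.

```python
import re

# Assumed word lists, chosen only for this illustration.
HEDGES = ("i think", "maybe", "probably", "might", "roughly", "approximately", "not sure")
SOURCE_MARKERS = ("http://", "https://", "doi:", "source:")

def red_flags(answer):
    """Return a list of warning labels for an AI answer (very rough heuristic)."""
    text = answer.lower()
    flags = []

    # "Too specific": a four-digit year or a percentage, with no source given.
    has_precise_detail = bool(re.search(r"\b\d{4}\b|\d+(\.\d+)?%", text))
    has_source = any(marker in text for marker in SOURCE_MARKERS)
    if has_precise_detail and not has_source:
        flags.append("too specific")

    # "Too confident": no hedging words anywhere in the answer.
    if not any(hedge in text for hedge in HEDGES):
        flags.append("too confident")

    return flags

print(red_flags("The first computer, ENIAC, was invented in 1943 at MIT by Alan Turing."))
print(red_flags("I think it was probably finished around the mid-1940s, but I'm not sure."))
```

The first answer trips both flags, and the second trips neither. A flag doesn’t prove an answer is wrong, and a clean result doesn’t prove it’s right; it’s only a nudge to go check.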

Can AI Stop Hallucinating?

Developers are working on it, but it’s tricky. Since the problem comes from how AI learns (predicting patterns rather than storing facts), it’s not easy to fix completely.

Progress is happening, though:

Some newer models can cross‑check their answers against verified databases.

Some connect to live web searches for up‑to‑date facts.

Others can say, “I’m not sure” when confidence is low.

And many companies now use human reviewers to catch and correct false outputs before they reach users.
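The “I’m not sure” behavior mentioned above can be pictured as a simple cutoff. This is a minimal sketch under loud assumptions: the probabilities are invented, the 0.7 threshold is arbitrary, and `answer_or_abstain` is a hypothetical function. Measuring a real model’s confidence is much harder than reading a number from a table.

```python
def answer_or_abstain(candidates, threshold=0.7):
    """Pick the most likely answer, or abstain if it falls below the threshold.

    candidates maps each possible answer to a made-up probability.
    """
    best_answer, best_prob = max(candidates.items(), key=lambda item: item[1])
    if best_prob < threshold:
        return "I'm not sure."
    return best_answer

# Hypothetical confidence scores for "When was ENIAC completed?"
print(answer_or_abstain({"1945": 0.9, "1943": 0.1}))
print(answer_or_abstain({"1945": 0.4, "1943": 0.35, "1946": 0.25}))
```

In the first case one answer clearly dominates, so it is returned. In the second, no answer clears the bar, so the sketch declines to guess instead of bluffing.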

How to Use AI Safely and Smartly

The best way to use AI is to treat it like a creative collaborator, not a fact‑checker.

It’s great for:

Brainstorming ideas

Drafting emails or articles

Summarizing documents

Generating outlines or examples

But for anything factual or high‑stakes, always verify. Check sources. Look things up. Ask for citations and confirm they exist. Use your judgment. That’s the one thing AI doesn’t have.